▲ HIGH ATTENTION

You are an expert travel planner. I need a 10-day itinerary for Japan covering Tokyo, Kyoto, and Osaka.

The trip starts on March 15. We are two adults traveling on a moderate budget of about $200/day.

We love street food, temples, and nature walks. We prefer trains over buses for intercity travel.

Day 1 should include Shinjuku Gyoen and Meiji Shrine with dinner in Omoide Yokocho for yakitori.

For Day 2, focus on Akihabara in the morning and Asakusa with Senso-ji Temple in the afternoon.

On Day 3, take the Shinkansen to Kyoto. Hotel should be near Kyoto Station for easy access to buses.

Day 4 should cover Fushimi Inari early morning and Kinkaku-ji in the afternoon. Budget about ¥3000 for lunch.

Day 5 could be Arashiyama bamboo grove and monkey park. Consider renting bikes for the area near the river.

IMPORTANT: My partner is allergic to shellfish. Please make sure all restaurant recommendations account for this.

Day 6 in Nara to see the deer park and Todai-ji. This can be a half-day trip returning to Kyoto by evening.

Day 7 is the transfer to Osaka via local train. Check into hotel near Namba for the street food scene.

▼ LOW ATTENTION — information here gets overlooked ▼

Day 8 should feature Osaka Castle in the morning and Dotonbori in the evening for takoyaki and okonomiyaki.

Day 9 is a flex day. Options: day trip to Himeji Castle or explore Shinsekai and Tsutenkaku Tower area.

Day 10 is departure from KIX. Allow 2 hours for airport transfer. Morning could include last-minute shopping.

Keep a small budget reserve for souvenirs — about ¥10,000. Pack light layers for March weather variability.

We'll need pocket wifi or eSIM. Prefer an eSIM that covers the full 10 days with unlimited data if possible.

Please output the itinerary as a day-by-day table with columns for Date, Location, Morning, Afternoon, Evening.

Include estimated costs per day in USD. Flag any days where we might exceed the $200 budget.

Format the response in markdown. Use bold for must-see attractions and italic for optional activities.

▲ HIGH ATTENTION

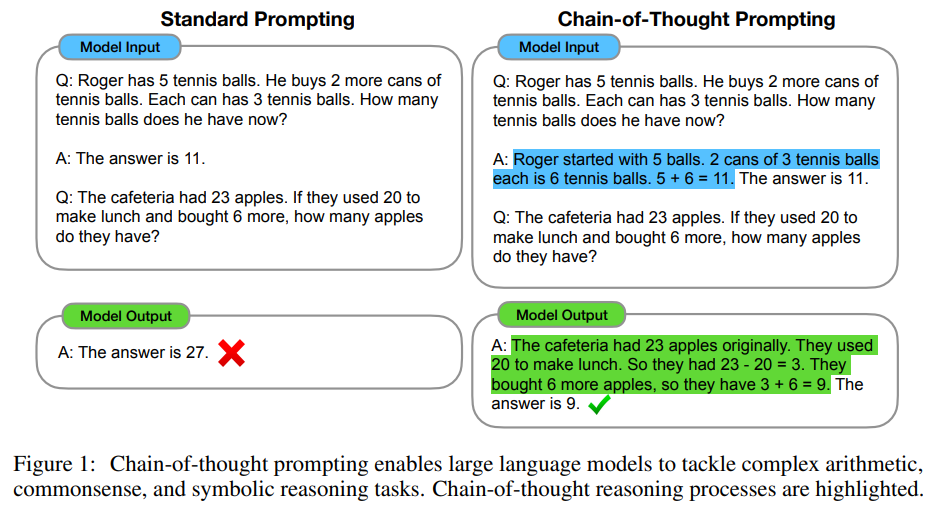

Models tend to pay the most attention to the beginning and end of the context window.

Information buried in the middle is more likely to be overlooked, even critical details like "my partner is allergic to shellfish."

Builder's rule: Put your most important context first and last. Never bury key instructions in the middle of a long prompt.
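One way to apply this rule is to assemble the prompt in code, so the critical constraint is always stated up front and repeated at the end. The sketch below is only an illustration: `build_prompt`, its parameters, and the "IMPORTANT:" and "Reminder:" labels are hypothetical choices, not part of any library.

```python
def build_prompt(task, critical, details):
    """Put critical constraints first, repeat them last, keep details in the middle."""
    # Hypothetical helper: the names and labels here are illustrative assumptions.
    critical_block = "\n".join(f"IMPORTANT: {item}" for item in critical)
    middle = "\n\n".join(details)
    # Only add the closing reminder when there is something to remind about.
    reminder = f"Reminder:\n{critical_block}" if critical_block else ""
    sections = [critical_block, task, middle, reminder]
    # Skip empty sections so the prompt has no stray blank blocks.
    return "\n\n".join(section for section in sections if section)


prompt = build_prompt(
    task="You are an expert travel planner. I need a 10-day itinerary for Japan "
         "covering Tokyo, Kyoto, and Osaka.",
    critical=[
        "My partner is allergic to shellfish. "
        "All restaurant recommendations must account for this."
    ],
    details=[
        "Day 1 should include Shinjuku Gyoen and Meiji Shrine.",
        "Day 8 should feature Osaka Castle in the morning and Dotonbori in the evening.",
    ],
)
print(prompt)
```

With this layout the shellfish allergy appears in both high-attention zones, and the day-by-day details sit in the middle, where an occasional miss costs less.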

Keep this in mind today: every prompting technique we learn builds on understanding where the model is, and is not, paying attention.